New hardware and software systems for condition monitoring and predictive maintenance promise to reduce downtime, human error and cost by improving early detection and automating maintenance procedures. But for many users, obstacles remain to achieving this outcome:

- Equipment assets are not sufficiently instrumented.

- Field instruments are not connected to automation networks.

- Automation networks are divided into multiple data silos by communication and information technology (IT) restrictions.

- Automation networks and business networks are not integrated.

The underlying issue behind many of these obstacles is the inherent complexity of the traditional control system architecture. But given the significance of remote monitoring to enable other Industry 4.0 goals, automation vendors are bringing new technologies to bear on the problem. This article discusses the applications of one technology, MQTT (formerly MQ Telemetry Transport or Message Queuing Telemetry Transport), to simplify the process of integrating equipment input/output (I/O) for remote monitoring.

Understanding the Roots

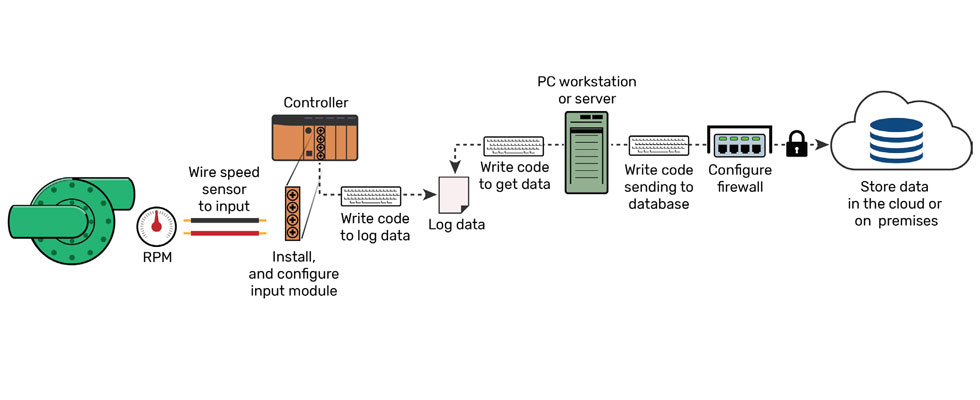

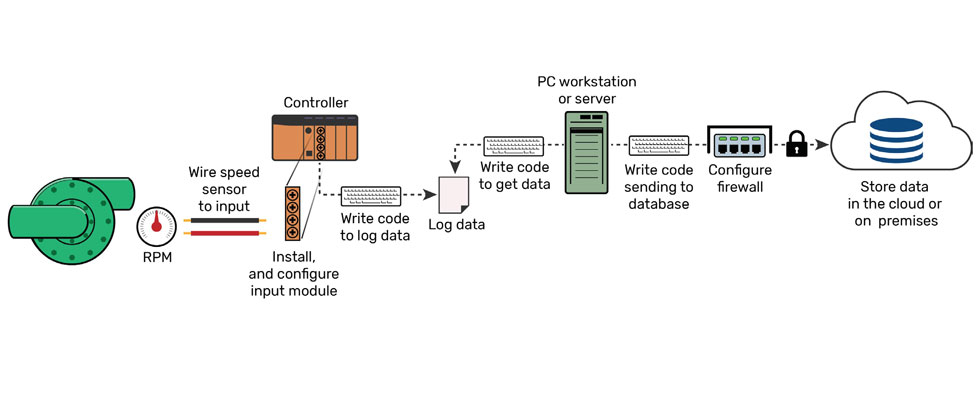

The complexity of automation technology becomes apparent when a technician or engineer wants to bring another signal into the system (Image 1). First, someone must identify the right I/O module type to use. Then someone (probably a different person, depending on the size of the facility) has to install the appropriate enclosure and wiring. That I/O module likely also belongs to a controller or gateway that then needs to be configured to scan the I/O and log data. More than likely, though, that controller cannot send I/O data to its ultimate destination, so an open platform communication (OPC) server or some other middleware has to be configured to scan the controller. Maybe there is a supervisory control and data acquisition (SCADA) system that also scans the controller, but a different piece of software is probably required to access, format, filter and prepare that data before it can be added to a database or analytics platform somewhere. How many people does it take to put the whole puzzle together, and what does that do to a project’s timeline and budget?

This complexity is the result of legacy operations technologies (OT) that were not designed to support the data-heavy tasks users want to perform today. The traditional control system architecture relies on point-to-point connections designed only to move data from slave devices to a master controller or application. The network master usually obtains fresh data by polling each device in turn, to which each device responds, whether its data has changed since the last request or not.
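The cost of poll-response can be made concrete with a small simulation. The sketch below is illustrative only: the device names and sample values are hypothetical, and each poll is counted simply as one request plus one response.

```python
def poll_cycle_stats(samples_by_device):
    """Count the traffic generated when a master polls every device in turn.

    samples_by_device maps a device name to the value that device would
    report at each successive poll.
    """
    requests = 0
    responses = 0
    unchanged = 0
    for samples in samples_by_device.values():
        previous = object()  # sentinel: nothing has been reported yet
        for value in samples:
            requests += 1   # the master always asks
            responses += 1  # the device always answers
            if value == previous:
                unchanged += 1  # this response carried no new information
            previous = value
    return {
        "messages": requests + responses,
        "responses": responses,
        "unchanged_responses": unchanged,
    }


# Hypothetical readings from two devices over five poll cycles.
samples = {
    "pump-1": [72.0, 72.0, 72.0, 72.5, 72.5],
    "pump-2": [40.1, 40.1, 40.1, 40.1, 40.1],
}
stats = poll_cycle_stats(samples)
print(stats)
```

With these made-up readings, 7 of the 10 responses repeat a value the master already had, yet every one of them still costs a request and a reply.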

And because security was not a concern when these devices and protocols were designed, IT personnel need to take measures to secure data after the fact with a plethora of firewall rules or network segmentation that further complicate data acquisition.

MQTT Provides an Alternative

MQTT is a communications protocol that has risen in popularity in recent years, riding on the wave of interest in the industrial internet of things (IIoT). However, the roots of its development reach back further.

In the 1990s, ConocoPhillips (now Phillips 66) was looking for a way to improve telemetry reporting over its SCADA network, which relied on low-bandwidth dial-up links and costly very small aperture terminal (VSAT) satellite connections. IBM partnered with system integrator Arcom Control Systems (now Cirrus Link Solutions) to develop a minimalist communication protocol that could handle intermittent network outages and high latency among many distributed devices over the transmission control protocol/internet protocol (TCP/IP).

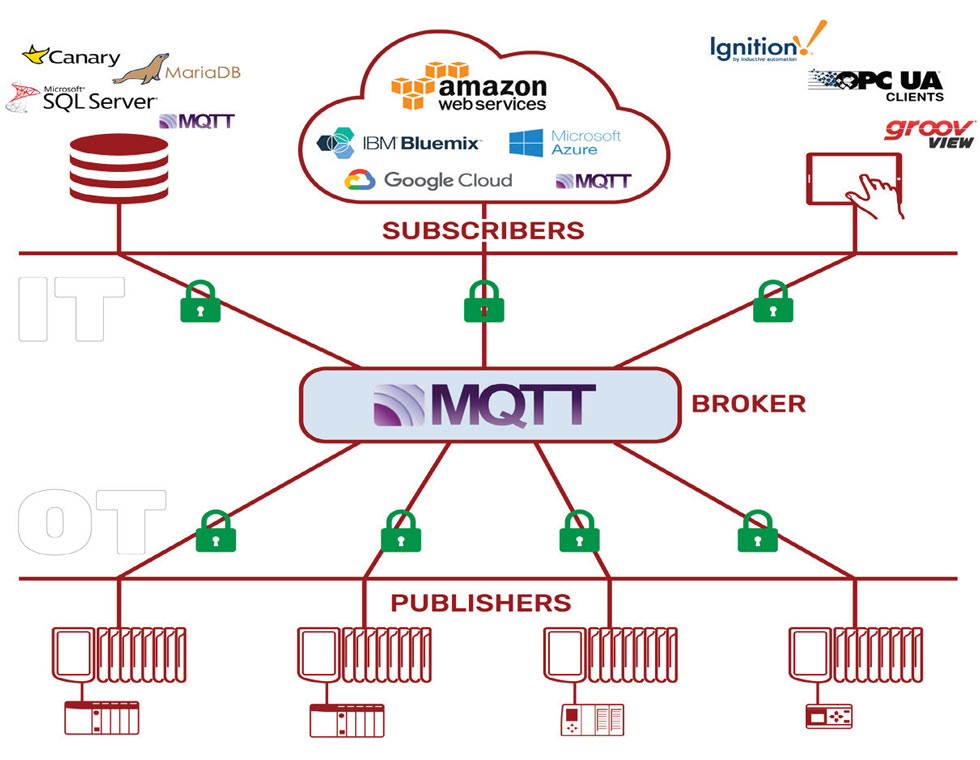

IBM abandoned the traditional poll-response communication model in favor of a brokered publish-subscribe model. In that scheme, a central server (the broker) serves as the communication hub for the entire network (Image 2). Field devices publish data to the broker only when they detect a change in a monitored value. The broker does not request updates from each device. Instead, its job is to route published field data to any interested subscribers, which can be software applications or other field devices.
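The brokered model can be sketched in a few lines. This is a toy in-memory illustration, not a real MQTT broker: the class names, topic names and readings are hypothetical, and the topic matching is a simplified version of MQTT's `+` (one level) and `#` (all remaining levels) wildcards.

```python
def topic_matches(topic_filter, topic):
    """Simplified MQTT-style matching: '+' is one level, '#' is the rest."""
    filter_levels = topic_filter.split("/")
    topic_levels = topic.split("/")
    for i, level in enumerate(filter_levels):
        if level == "#":
            return True
        if i >= len(topic_levels):
            return False
        if level != "+" and level != topic_levels[i]:
            return False
    return len(filter_levels) == len(topic_levels)


class Broker:
    """Routes each published message to every matching subscriber."""

    def __init__(self):
        self._subscriptions = []  # list of (topic_filter, callback)

    def subscribe(self, topic_filter, callback):
        self._subscriptions.append((topic_filter, callback))

    def publish(self, topic, payload):
        delivered = 0
        for topic_filter, callback in self._subscriptions:
            if topic_matches(topic_filter, topic):
                callback(topic, payload)
                delivered += 1
        return delivered


class ChangeOnlyPublisher:
    """Field-device stand-in that publishes only when its value changes."""

    _UNSET = object()

    def __init__(self, broker, topic):
        self._broker = broker
        self._topic = topic
        self._last = self._UNSET

    def update(self, value):
        if value == self._last:
            return False  # nothing new, so nothing is sent
        self._last = value
        self._broker.publish(self._topic, value)
        return True


broker = Broker()
scada, historian = [], []
broker.subscribe("plant/pump-1/temperature", lambda t, p: scada.append((t, p)))
broker.subscribe("plant/#", lambda t, p: historian.append((t, p)))

sensor = ChangeOnlyPublisher(broker, "plant/pump-1/temperature")
for reading in [72.0, 72.0, 72.5, 72.5, 72.5]:
    sensor.update(reading)

print(scada)
print(historian)
```

The device only ever talks to the broker. Adding the second subscriber required no change on the device side, which is the decoupling the publish-subscribe model is meant to provide.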

These changes eliminate most of the usual back-and-forth traffic required to obtain accurate data. Combined with MQTT’s efficient data format, this results in an 80 to 95 percent reduction in overall bandwidth consumption, according to Cirrus Link. But the decoupled nature of MQTT data exchange holds the most promise for creating a more efficient control system architecture.
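To give a sense of how compact the wire format is, the sketch below encodes a single MQTT 3.1.1 PUBLISH packet at quality of service (QoS) level 0 by hand. The topic and payload are hypothetical, and the example makes no attempt to reproduce the bandwidth figure quoted above. It only shows the per-message framing.

```python
import struct


def encode_remaining_length(n):
    """MQTT variable-length integer: 7 data bits per byte, high bit = more."""
    if not 0 <= n <= 268_435_455:
        raise ValueError("remaining length out of range for MQTT")
    out = bytearray()
    while True:
        byte = n % 128
        n //= 128
        if n > 0:
            byte |= 0x80  # continuation bit: another length byte follows
        out.append(byte)
        if n == 0:
            return bytes(out)


def encode_publish_qos0(topic, payload):
    """Build a PUBLISH packet with QoS 0, no DUP flag and no RETAIN flag."""
    topic_bytes = topic.encode("utf-8")
    # Variable header: 2-byte big-endian topic length, then the topic itself.
    variable_header = struct.pack("!H", len(topic_bytes)) + topic_bytes
    remaining = len(variable_header) + len(payload)
    # 0x30 = packet type 3 (PUBLISH) in the high nibble, all flags clear.
    return bytes([0x30]) + encode_remaining_length(remaining) + variable_header + payload


topic = "plant/pump-1/temp"   # hypothetical topic name
payload = b"72.5"             # hypothetical reading
packet = encode_publish_qos0(topic, payload)
overhead = len(packet) - len(topic.encode("utf-8")) - len(payload)
print(len(packet), overhead)
```

For this message the protocol adds 4 bytes of framing on top of the topic and payload: one type byte, one length byte and the two-byte topic length.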

In an MQTT network, a field device or gateway only needs to know about one endpoint, the broker, but the broker can share data anywhere. For instance, MQTT is gaining support in traditional industrial software, like SCADA applications. MQTT is also supported natively by the major cloud IoT platforms. That means that I/O data can flow from the field to a local maintenance database or SCADA host and also to the cloud without building new connections between devices and applications. Any application that wants access to field data simply has to be pointed to the common MQTT broker. MQTT connections originate from the device and can be encrypted with Secure Sockets Layer and Transport Layer Security (SSL/TLS), which runs on top of TCP/IP. As a result, secure site-to-site communication over public networks is also possible without opening inbound firewall ports at the field site or imposing other restrictive firewall rules.
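The device-originating, encrypted connection described here can be sketched with Python's standard library alone. This is a minimal illustration under stated assumptions: the function names are hypothetical, and a real deployment would hand the connection to an MQTT client library rather than use a raw socket. Port 8883 is the port registered for MQTT over TLS.

```python
import socket
import ssl

MQTT_TLS_PORT = 8883  # registered port for MQTT over TLS


def make_tls_context():
    """Client-side TLS settings: verify the broker's certificate and hostname."""
    context = ssl.create_default_context()
    context.minimum_version = ssl.TLSVersion.TLSv1_2
    return context


def open_outbound_connection(broker_host, port=MQTT_TLS_PORT, timeout=10.0):
    """Dial out from the device to the broker and wrap the socket in TLS.

    The connection is outbound only, so the field site does not need to
    accept any inbound connections.
    """
    context = make_tls_context()
    raw = socket.create_connection((broker_host, port), timeout=timeout)
    try:
        return context.wrap_socket(raw, server_hostname=broker_host)
    except Exception:
        raw.close()
        raise


context = make_tls_context()
print(context.verify_mode, context.check_hostname)
```

Nothing in the sketch listens on a port. The device opens the session, and the broker replies over that same connection.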

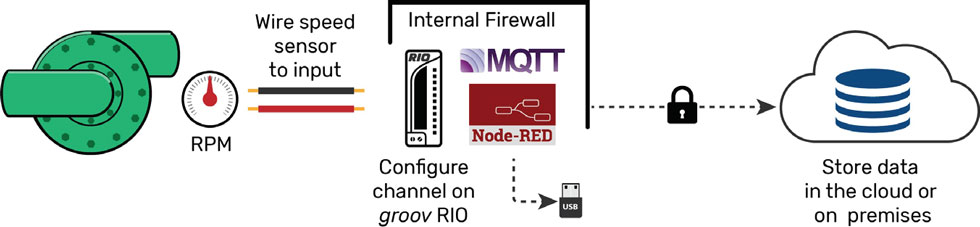

This architecture would not be complete without device support in the field (Image 3). Fortunately, vendors are responding to the demand for an alternative approach and embedding support for MQTT into new sensors and field devices. For legacy equipment, new controller and I/O gateway options are also available that add MQTT connectivity without a rip-and-replace approach, while still bypassing many of the layers usually required. New options in edge computing make this even more appealing, since they enable much of the necessary data processing to happen in the field, improving local responsiveness and further reducing network demand.

Scalable remote monitoring is difficult to achieve because of the complexities introduced by traditional industrial communication protocols and the lack of connectivity in legacy control devices. The result is the common control system architecture, with its many hardware and software layers between field I/O and the applications used for operations.

The MQTT communication protocol provides a path to a simpler industrial architecture that breaks down data silos, increases security, and reduces the time and personnel required to achieve data integration. When paired with new edge computing devices, it can bypass the traditional technology stack and send equipment data directly to on-premises and cloud-based databases and analytics platforms. Brownfield sites can leverage the same architecture to integrate legacy equipment without adopting a rip-and-replace approach.